
YouTube Rolls Out AI Deepfake Detection Tool For Partner Program Creators

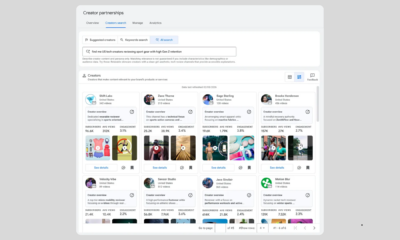

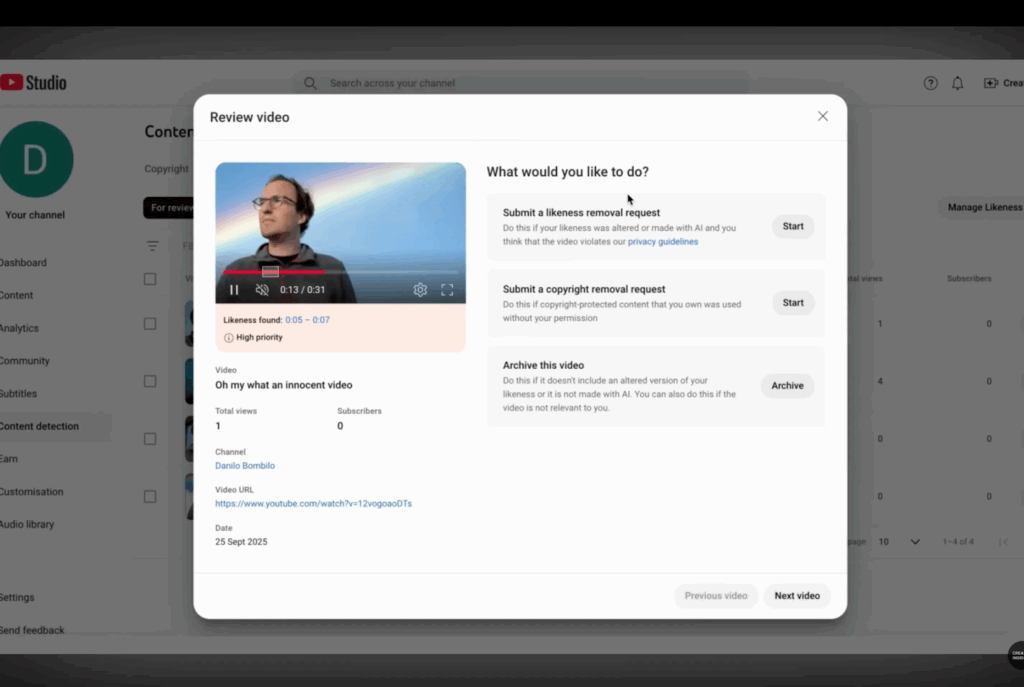

YouTube launched a new AI likeness detection feature on October 21, 2025, for creators enrolled in its Partner Program, enabling them to identify and report unauthorized uploads that use their likeness. The tool lives in YouTube Studio’s Content Detection tab, where creators who have verified their identity can review flagged videos.

The platform notified the first wave of eligible creators via email and plans a phased rollout to additional creators over the coming months.

This release follows YouTube’s earlier expansion of likeness detection tools to all YouTube Partner Program creators through an open beta, announced during its “Made on YouTube 2025” event commemorating the platform’s 20th anniversary.

Current Capabilities and Limitations

At its current stage of development, the system may flag videos featuring creators’ actual faces, not just synthetic versions. YouTube warns users that the tool might display their authentic content alongside potential AI-generated material.

The functionality mirrors YouTube’s existing Content ID system, which identifies copyrighted audio and visual content across the platform.

Industry Context and Previous Development

The feature’s origins trace back to an announcement last year, followed in December by testing through a pilot program with talent represented by Creative Artists Agency. This initial collaboration gave “several of the world’s most influential figures” access to early-stage technology designed to identify AI-generated content featuring their likeness.

The expansion comes after a recent controversy in which YouTube admitted to using machine learning to enhance creators’ Shorts without notifying them or asking for consent. In August, musicians and other YouTubers discovered subtle alterations to their videos that affected both their on-screen appearance and the video quality.

Broader AI Content Management Strategy

This detection tool is part of YouTube’s broader approach to AI-generated content. In March 2024, the platform began requiring creators to label uploads containing AI-generated or altered content.

YouTube also established strict policies regarding AI-generated music that mimics artists’ unique vocal styles. These measures come as YouTube and parent company Google develop their own AI video generation and editing tools.

The likeness detection technology aligns with CEO Neal Mohan’s assertion that “no AI tool will own the future of entertainment,” and represents YouTube’s commitment to providing creators with greater control over their digital presence amid rapid AI adoption.